Apache Spark vs MapReduce: A Detailed Comparison

Knowledge Hut

MAY 2, 2024

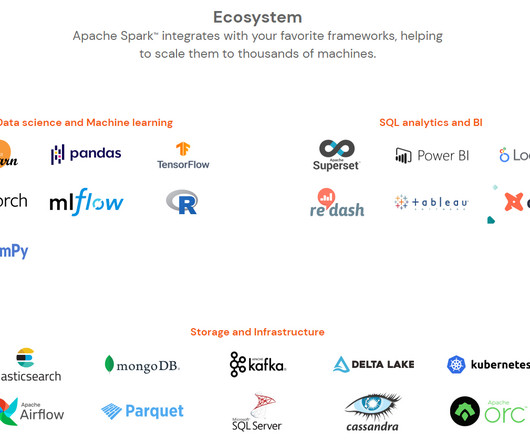

MapReduce has been around longer: its open-source Hadoop implementation dates to 2006 and gained industry acceptance in its early years. Hadoop MapReduce is written in Java, and its APIs are fairly complex for new programmers, so the learning curve is steep. Spark supports most common data formats, including Parquet, Avro, ORC, and JSON.
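To give a feel for the programming model behind that learning curve, here is a minimal sketch of a word count split into map, shuffle, and reduce phases. It is plain Python for illustration only, not the Hadoop Java API; the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are hypothetical and everything runs in a single process rather than across a cluster.

```python
from collections import defaultdict


def map_phase(line):
    # Map: emit a (word, 1) pair for every word in one input line.
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    # Shuffle: group all emitted values by key, as the framework
    # would do between the map and reduce stages.
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    # Reduce: collapse the list of values for one key into a total.
    return key, sum(values)


def word_count(lines):
    # Run the three phases in sequence on an in-memory list of lines.
    pairs = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle_phase(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


counts = word_count(["spark and mapreduce", "spark is fast"])
print(counts)
```

Even in this toy form, the developer has to express the job as separate map and reduce steps joined by a grouping stage. In a real Hadoop job, each step additionally requires Java classes and job configuration, which is a large part of why the API feels heavy to newcomers.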
