Streaming Data from the Universe with Apache Kafka

Confluent

JUNE 13, 2019

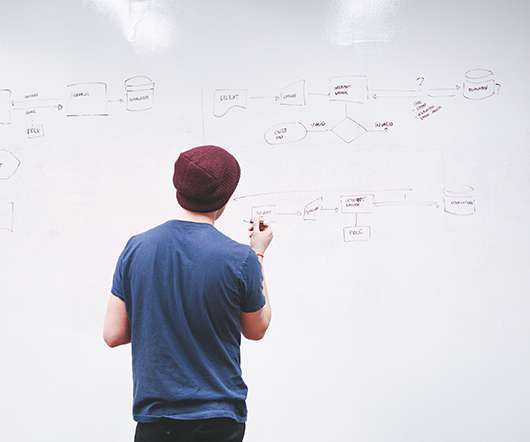

The data processing pipeline characterizes these objects, deriving key parameters such as brightness, color, ellipticity, and coordinate location, and broadcasts this information in alert packets. This pipeline is a great example of a use case for Apache Kafka®.
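To make the idea of an alert packet concrete, here is a minimal sketch of how one might be assembled and handed to a producer. The excerpt does not specify the packet schema or wire format, so everything here is an assumption for illustration: the `Alert` class, its field names, the JSON encoding, and the `send` callable, which stands in for a real Kafka producer call.

```python
import json
from dataclasses import dataclass, asdict
from typing import Callable, Iterable, Tuple


@dataclass
class Alert:
    """Hypothetical alert packet; field names are illustrative only."""
    object_id: str
    brightness: float    # assumed to be a magnitude-like value
    color: float         # assumed to be a difference between two filters
    ellipticity: float   # 0.0 would mean a perfectly round source
    ra_deg: float        # coordinate location: right ascension, degrees
    dec_deg: float       # coordinate location: declination, degrees


def serialize_alert(alert: Alert) -> Tuple[bytes, bytes]:
    """Return (key, value) bytes in the shape a Kafka-style producer expects."""
    key = alert.object_id.encode("utf-8")
    # JSON is a stand-in encoding chosen for readability in this sketch.
    value = json.dumps(asdict(alert), sort_keys=True).encode("utf-8")
    return key, value


def broadcast(alerts: Iterable[Alert],
              send: Callable[[bytes, bytes], None]) -> int:
    """Serialize each alert and pass it to `send(key, value)`.

    `send` is a placeholder for a real producer; any callable works here.
    Returns the number of alerts handed off.
    """
    count = 0
    for alert in alerts:
        key, value = serialize_alert(alert)
        send(key, value)
        count += 1
    return count


if __name__ == "__main__":
    sent = []
    alerts = [Alert("obj-001", 18.2, 0.4, 0.1, 150.1, 2.2)]
    broadcast(alerts, lambda k, v: sent.append((k, v)))
    print(sent[0][1].decode("utf-8"))
```

Keying each message by object identifier is one plausible choice, since Kafka routes messages with the same key to the same partition, which keeps alerts for a given object in order. A real deployment would swap the `send` callable for a producer client and would likely use a compact binary encoding with a defined schema.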
