Simplifying Data Architecture and Security to Accelerate Value

Snowflake

NOVEMBER 11, 2024

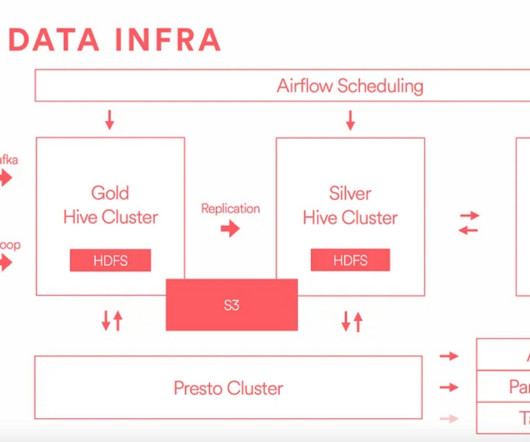

This reduces the overall complexity of getting streaming data ready to use: simply create an external access integration with your existing Kafka solution.

SnowConvert is an easy-to-use code conversion tool that accelerates migrations from legacy relational database management systems (RDBMS) to Snowflake.
