The Race For Data Quality in a Medallion Architecture

DataKitchen

NOVEMBER 5, 2024

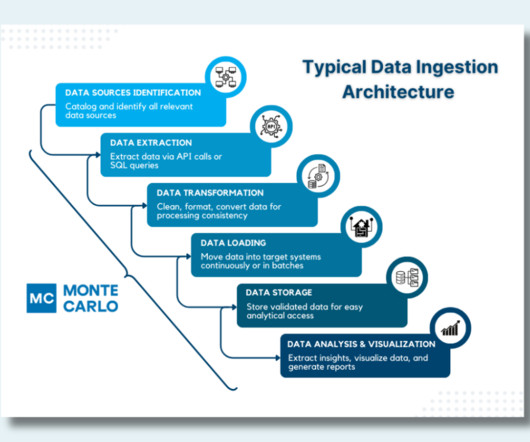

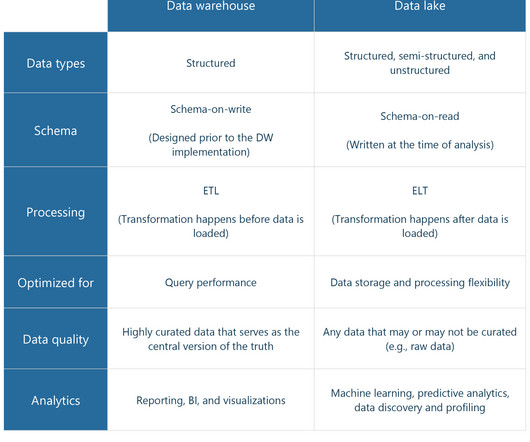

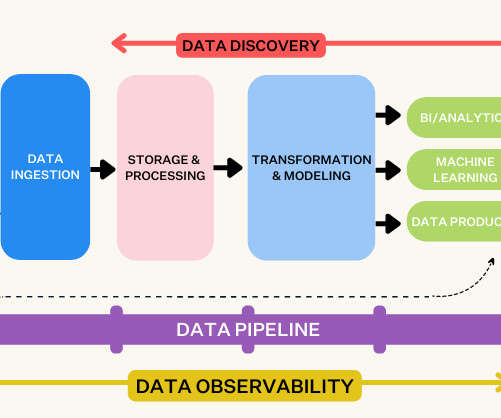

The medallion architecture sounds great, but how do you prove the data is correct at each layer? How do you ensure data quality in every layer?

Bronze, Silver, and Gold: The Data Architecture Olympics?

The Bronze layer is the initial landing zone for all incoming raw data, capturing it in its unprocessed, original form.
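To make "unprocessed, original form" concrete, here is a minimal sketch of a Bronze landing step paired with a first data-quality check that proves the layer is a faithful copy of its source. It assumes raw records arrive as text lines; `BronzeRecord`, `land_in_bronze`, and `check_bronze_matches_source` are hypothetical names used for illustration, not a DataKitchen or platform API.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class BronzeRecord:
    """One raw record as landed in Bronze (hypothetical schema)."""
    source: str        # system the record came from
    ingested_at: str   # UTC timestamp of the landing run
    payload: str       # the raw line, stored exactly as received
    sha256: str        # fingerprint of the raw payload, taken at landing time


def land_in_bronze(raw_lines: list[str], source: str) -> list[BronzeRecord]:
    """Land raw lines without parsing, cleaning, or dropping anything."""
    ingested_at = datetime.now(timezone.utc).isoformat()
    return [
        BronzeRecord(
            source=source,
            ingested_at=ingested_at,
            payload=line,
            sha256=hashlib.sha256(line.encode("utf-8")).hexdigest(),
        )
        for line in raw_lines
    ]


def check_bronze_matches_source(raw_lines: list[str], bronze: list[BronzeRecord]) -> list[str]:
    """Return a list of problems; an empty list means Bronze matches the source."""
    problems = []
    # Completeness: every source record must have landed.
    if len(raw_lines) != len(bronze):
        problems.append(f"row count mismatch: source={len(raw_lines)} bronze={len(bronze)}")
    for i, (line, rec) in enumerate(zip(raw_lines, bronze)):
        # Fidelity: the stored payload must be byte-for-byte the source line.
        if rec.payload != line:
            problems.append(f"row {i}: payload differs from source")
        # Integrity: the payload must still match the checksum taken at landing.
        if hashlib.sha256(rec.payload.encode("utf-8")).hexdigest() != rec.sha256:
            problems.append(f"row {i}: checksum mismatch")
    return problems


if __name__ == "__main__":
    source = ['{"id": 1, "amount": "12.50"}', '{"id": 2, "amount": ""}', "not even json"]
    bronze = land_in_bronze(source, source="orders_api")
    print(check_bronze_matches_source(source, bronze))      # []
    print(check_bronze_matches_source(source, bronze[:2]))  # ['row count mismatch: source=3 bronze=2']
```

The design choice here is that Bronze never parses or repairs: the malformed `"not even json"` line lands as-is, and validation of content is deferred to later layers. The check answers only one of the questions above, for one layer: did Bronze capture everything, unchanged?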
