Stitching Together Enterprise Analytics With Microsoft Fabric

Data Engineering Podcast

JUNE 23, 2024

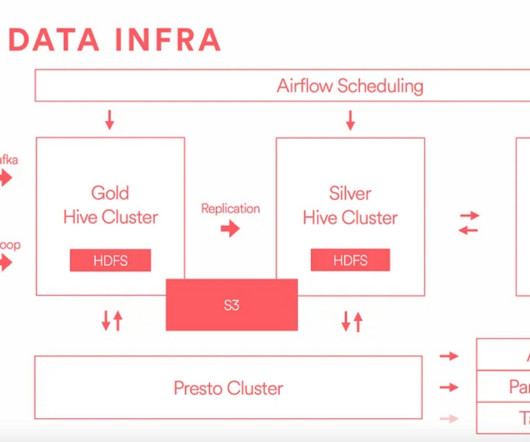

Announcements: Hello and welcome to the Data Engineering Podcast, the show about modern data management. Data lakes are notoriously complex. Data lakes in various forms have been gaining significant popularity as a unified interface to an organization's analytics. When is Fabric the wrong choice?
