Implementing the Netflix Media Database

Netflix Tech

DECEMBER 14, 2018

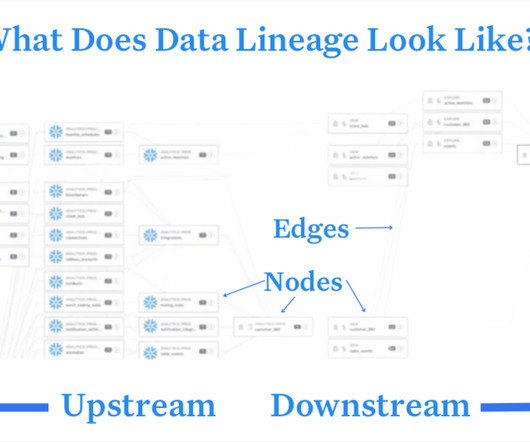

A fundamental requirement for any lasting data system is that it scales with the growth of the business applications it serves. NMDB is built to be a highly scalable, multi-tenant media metadata system that can sustain high write and read throughput while supporting near real-time queries.
