How I Optimized Large-Scale Data Ingestion

databricks

SEPTEMBER 6, 2024

Explore what it is like to be a PM intern at a technical powerhouse like Databricks, learning how to advance data ingestion tools to drive efficiency.

Hevo

JUNE 20, 2024

As data collection within organizations grows rapidly, developers are automating data movement with data ingestion techniques. However, implementing complex data ingestion techniques can be tedious and time-consuming for developers.

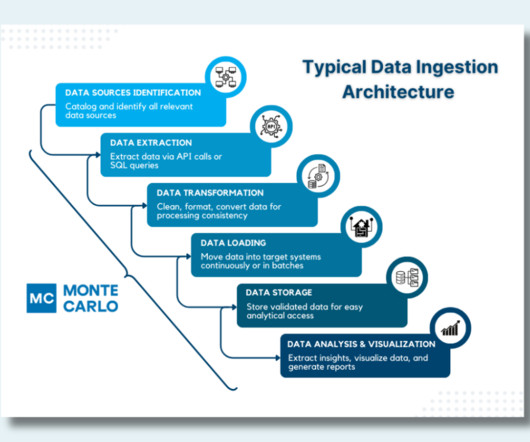

Monte Carlo

MAY 28, 2024

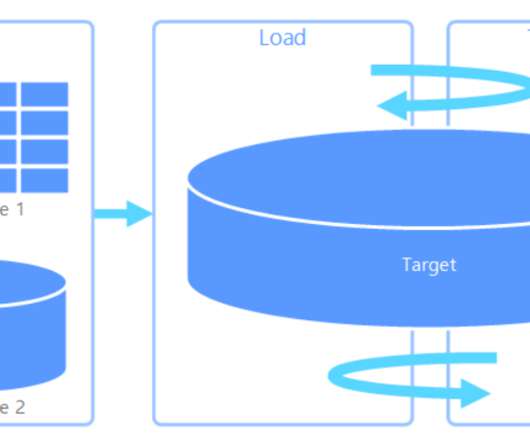

A data ingestion architecture is the technical blueprint that ensures that every pulse of your organization’s data ecosystem brings critical information to where it’s needed most. Ensuring all relevant data inputs are accounted for is crucial for a comprehensive ingestion process.

Hevo

APRIL 26, 2024

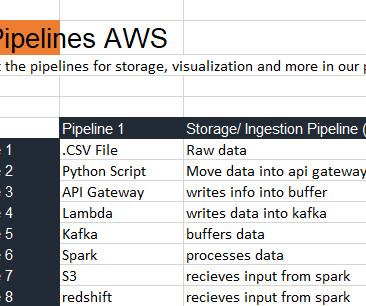

Managing vast data volumes is a necessity for organizations in the current data-driven economy. To handle long-running processing on such data, companies turn to data pipelines, which automate the work of extracting data, transforming it, and storing it in the desired location.

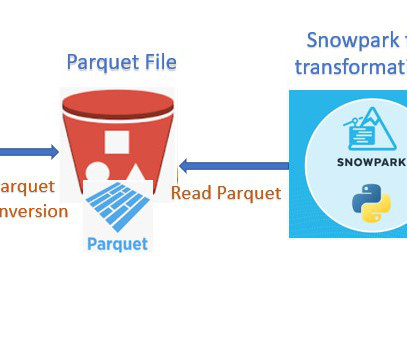

Cloudyard

JUNE 6, 2023

Parquet, a columnar storage file format, saves both time and space in big data processing. The walkthrough COPYs the data from an external stage into the Snowflake table created in the previous step, reads the data from that table, filters it down to records with Active status in a DataFrame, and loads the DataFrame into a new Snowflake table.
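The read, filter, and reload steps at the end of that walkthrough can be sketched with Python's built-in `sqlite3` module. This is a minimal sketch under stated assumptions, not the Snowflake workflow itself: `sqlite3` stands in for Snowflake, a list of tuples stands in for the DataFrame, and the table and column names (`customers`, `customers_active`, `status`) and the function name are hypothetical.

```python
import sqlite3


def filter_active_to_new_table(conn: sqlite3.Connection) -> int:
    """Read `customers`, keep status == 'Active', write them to `customers_active`.

    Hypothetical schema: customers(id INTEGER, name TEXT, status TEXT).
    sqlite3 stands in for Snowflake; a list of tuples stands in for the DataFrame.
    """
    # Step 1: read the ingested table into memory.
    rows = conn.execute("SELECT id, name, status FROM customers").fetchall()
    # Step 2: keep only records whose status is exactly 'Active'.
    active = [row for row in rows if row[2] == "Active"]
    # Step 3: load the filtered records into a new table (recreated on each run).
    conn.execute("DROP TABLE IF EXISTS customers_active")
    conn.execute("CREATE TABLE customers_active (id INTEGER, name TEXT, status TEXT)")
    conn.executemany("INSERT INTO customers_active VALUES (?, ?, ?)", active)
    conn.commit()
    return len(active)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER, name TEXT, status TEXT)")
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?, ?)",
        [(1, "Ada", "Active"), (2, "Bo", "Inactive"), (3, "Cy", "Active")],
    )
    print(filter_active_to_new_table(conn))  # 2
```

Recreating the output table on every run keeps the step idempotent, so rerunning it after a failed load does not duplicate rows.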

Snowflake

JANUARY 26, 2023

Accessing data from the manufacturing shop floor is a key topic of interest for most cloud platform vendors, driven by the pace of Industry 4.0 adoption. A core Industry 4.0 practice is the ability to collect and analyze vast amounts of data, allowing for improved efficiency, accuracy, and decision-making, and its importance cannot be overstated.

Hevo

MARCH 28, 2023

As businesses continue to generate and collect large amounts of data, the need for automated data ingestion becomes increasingly critical. The process of ingesting and processing vast amounts of information can be overwhelming.

KDnuggets

SEPTEMBER 11, 2024

Learn how to create a data science pipeline with a complete structure.

Hevo

JULY 5, 2024

Managing data ingestion from Azure Blob Storage to Snowflake can be cumbersome. But what if you could automate the process, ensure data integrity, and leverage real-time analytics? Manual processes lead to inefficiencies and potential errors while also increasing operational overhead.

Databand.ai

JULY 19, 2023

Complete Guide to Data Ingestion: Types, Process, and Best Practices, by Helen Soloveichik, July 19, 2023. What is data ingestion? Data ingestion is the process of obtaining, importing, and processing data for later use or storage in a database. The article also covers why data ingestion is important.

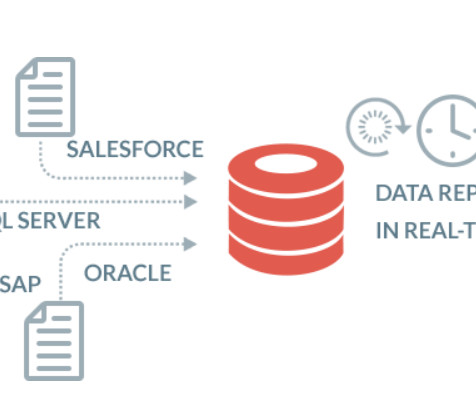

Knowledge Hut

JULY 3, 2023

In today's fast-paced, data-driven world, users increasingly depend on real-time insights to gain a competitive edge and decide on a plan of action. This is where real-time data ingestion comes into the picture: data is collected from sources such as social media feeds, website interactions, and log files, and processed as it arrives.

databricks

MAY 23, 2024

We're excited to announce native support in Databricks for ingesting XML data. XML is a popular file format for representing complex data.

KDnuggets

APRIL 6, 2022

Learn tricks for importing various data formats using Pandas with a few lines of code. The examples cover importing SQL databases, Excel sheets, HTML tables, CSV, and JSON files.
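As a rough illustration of that idea, the sketch below reads three of the listed formats (CSV, JSON, and a SQL table) with pandas and joins them. It is a minimal sketch, not the article's code: the sample data, column names, and in-memory SQLite database are hypothetical, and Excel and HTML are left out because `read_excel` and `read_html` need optional parser packages.

```python
import io
import sqlite3

import pandas as pd

# Hypothetical sample inputs standing in for real files.
csv_text = "id,city\n1,Oslo\n2,Lima\n"
json_text = '[{"id": 1, "temp_c": 4.5}, {"id": 2, "temp_c": 18.0}]'

# CSV: read_csv accepts a path, URL, or file-like object.
cities = pd.read_csv(io.StringIO(csv_text))

# JSON: wrap literal strings in StringIO (passing raw JSON strings is deprecated in pandas 2.1+).
temps = pd.read_json(io.StringIO(json_text))

# SQL: read_sql accepts a sqlite3 connection directly; other databases usually go through SQLAlchemy.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE visits (id INTEGER, visits INTEGER)")
conn.executemany("INSERT INTO visits VALUES (?, ?)", [(1, 10), (2, 3)])
visits = pd.read_sql("SELECT id, visits FROM visits", conn)

# Join the three sources on their shared key.
combined = cities.merge(temps, on="id").merge(visits, on="id")
print(combined)
```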

Hevo

APRIL 19, 2024

A fundamental requirement for any data-driven organization is a streamlined data delivery mechanism. With organizations collecting data at an unprecedented rate, designing data pipelines that keep information flowing to analytics and Machine Learning tasks becomes crucial for businesses.

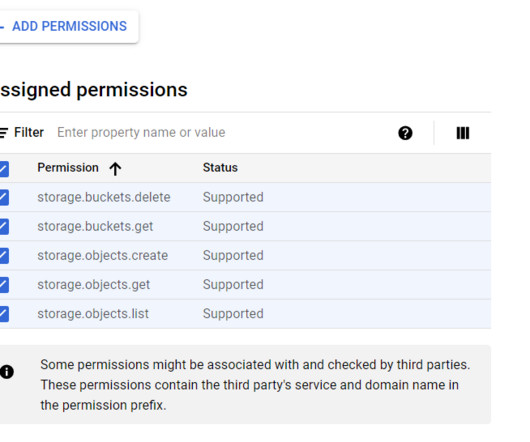

Confluent

JANUARY 22, 2024

The new fully managed BigQuery Sink V2 connector for Confluent Cloud offers streamlined data ingestion and cost-efficiency. Learn about the Google-recommended Storage Write API and OAuth 2.0 support.

Hevo

JULY 17, 2024

Every data-centric organization uses a data lake, a data warehouse, or both to meet its data needs. Data lakes bring flexibility and accessibility, whereas warehouses bring structure and performance to the data architecture.

Hevo

JUNE 20, 2024

The surge in Big Data and Cloud Computing has created a huge demand for real-time Data Analytics. Companies rely on complex ETL (Extract, Transform, and Load) pipelines that collect data from sources in raw form and deliver it to a storage destination in a form suitable for analysis.

Hepta Analytics

FEBRUARY 14, 2022

DE Zoomcamp 2.2.1 – Introduction to Workflow Orchestration. Following last week's blog post, we move on to data ingestion. We already had a script that downloaded a CSV file, processed the data, and pushed it to a Postgres database. This week, we had to think about our data ingestion design.
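A script of that shape (read a CSV, process it, push it to a database) can be sketched as follows. This is a minimal sketch under stated assumptions, not the post's actual script: the CSV arrives as a string instead of a download, Python's built-in `sqlite3` stands in for Postgres, and the `trips` table, its columns, `ingest_csv`, and `batched` are hypothetical names. Inserting in batches is one common design choice for large files.

```python
import csv
import io
import sqlite3
from itertools import islice
from typing import Iterable, Iterator


def batched(rows: Iterable[tuple], size: int) -> Iterator[list]:
    """Yield lists of at most `size` rows (itertools.batched exists only in 3.12+)."""
    it = iter(rows)
    while batch := list(islice(it, size)):
        yield batch


def ingest_csv(csv_text: str, conn: sqlite3.Connection, batch_size: int = 2) -> int:
    """Parse CSV text, cast columns, and insert rows into `trips` in batches.

    Hypothetical columns: trip_id (int), distance_km (float).
    sqlite3 stands in for the Postgres database described in the post.
    """
    conn.execute("CREATE TABLE IF NOT EXISTS trips (trip_id INTEGER, distance_km REAL)")
    reader = csv.DictReader(io.StringIO(csv_text))
    # Processing step: cast text fields to types, skipping rows with no distance.
    rows = (
        (int(r["trip_id"]), float(r["distance_km"]))
        for r in reader
        if r["distance_km"]
    )
    total = 0
    for batch in batched(rows, batch_size):
        conn.executemany("INSERT INTO trips VALUES (?, ?)", batch)
        conn.commit()  # commit per batch so earlier batches survive a later failure
        total += len(batch)
    return total


if __name__ == "__main__":
    sample = "trip_id,distance_km\n1,2.5\n2,\n3,7.0\n4,1.25\n"
    conn = sqlite3.connect(":memory:")
    print(ingest_csv(sample, conn))  # 3 (row 2 has no distance and is skipped)
```

Because the rows are produced by a generator, only one batch is held in memory at a time, which is what makes the pattern usable for files larger than RAM.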

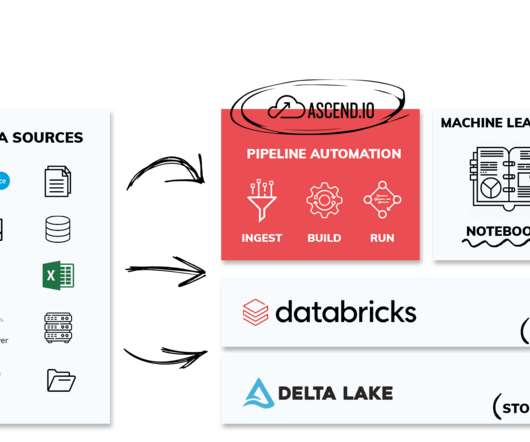

Ascend.io

DECEMBER 19, 2022

We hope the real-time demonstrations of Ascend automating data pipelines were a real treat, along with the special edition T-shirt designed specifically for the show (picture of our founder and CEO rocking the T-shirt below). With this approach, we're able to augment our uniquely beautiful and intuitive visualization of data pipelines.

KDnuggets

JULY 29, 2024

Learn to build end-to-end data science pipelines, from data ingestion to data visualization, using the Pandas pipe method.
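The idea behind the pipe method is that each pipeline stage is a plain function that takes a DataFrame and returns one, so stages can be chained left to right. A minimal sketch, assuming made-up sales data; the stage functions (`ingest`, `drop_missing`, `add_total`, `summarize`) and column names are hypothetical, not taken from the article.

```python
import io

import pandas as pd


def ingest(csv_text: str) -> pd.DataFrame:
    """Ingestion step: parse raw CSV text into a DataFrame."""
    return pd.read_csv(io.StringIO(csv_text))


def drop_missing(df: pd.DataFrame, column: str) -> pd.DataFrame:
    """Cleaning step: drop rows where `column` is missing."""
    return df.dropna(subset=[column])


def add_total(df: pd.DataFrame, price_col: str, qty_col: str) -> pd.DataFrame:
    """Feature step: return a copy with a `total` column (input is not mutated)."""
    return df.assign(total=df[price_col] * df[qty_col])


def summarize(df: pd.DataFrame, by: str) -> pd.DataFrame:
    """Aggregation step: sum `total` per group, ready for plotting."""
    return df.groupby(by, as_index=False)["total"].sum()


raw = "region,price,qty\nnorth,2.0,3\nsouth,1.5,4\nnorth,,5\nsouth,4.0,1\n"

# Each .pipe call passes the current DataFrame as the first argument.
summary = (
    ingest(raw)
    .pipe(drop_missing, column="price")
    .pipe(add_total, price_col="price", qty_col="qty")
    .pipe(summarize, by="region")
)
print(summary)
```

A final visualization step such as `summary.plot.bar(x="region", y="total")` would render the chart, assuming matplotlib is installed. Keeping each stage a pure function makes it easy to test stages in isolation.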

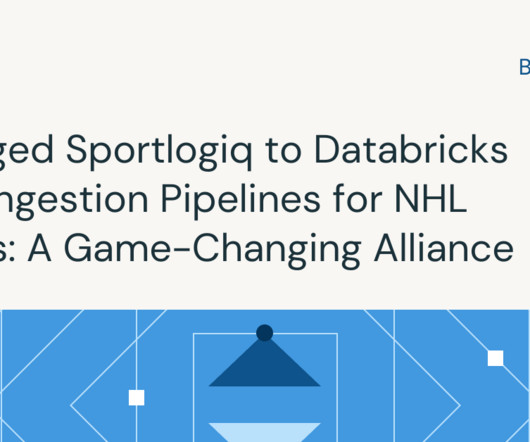

databricks

MARCH 29, 2024

In the competitive world of professional hockey, NHL teams are always seeking to optimize their performance, and advanced analytics has become increasingly important.

Rockset

AUGUST 4, 2021

Organizations that depend on data for their success and survival need robust, scalable data architecture, typically employing a data warehouse for analytics needs. Snowflake is often their cloud-native data warehouse of choice. Data ingestion must be performant to handle large amounts of data.

Analytics Vidhya

FEBRUARY 20, 2023

Azure Data Factory (ADF) is a cloud-based data ingestion and ETL (Extract, Transform, Load) tool. Data-driven workflows in ADF orchestrate and automate data movement and data transformation.

DataKitchen

MAY 10, 2024

The Five Use Cases in Data Observability: Effective Data Anomaly Monitoring (#2). Ensuring the accuracy and timeliness of data ingestion is a cornerstone for maintaining the integrity of data systems. This process is critical as it ensures data quality from the onset.
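One simple way to monitor the accuracy and timeliness of each load, sketched here as an assumption rather than DataKitchen's method, is a freshness check on the latest load time plus a z-score test on the load's row count against recent history. The function name, parameters, and default thresholds are hypothetical and illustrative only.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean, stdev


def check_ingestion(
    history: list[int],
    latest_count: int,
    last_loaded_at: datetime,
    now: datetime,
    max_lag: timedelta = timedelta(hours=1),
    z_threshold: float = 3.0,
) -> list[str]:
    """Return alert messages for a late load or an anomalous row count.

    `history` holds row counts from previous loads (needs at least 2 values).
    Thresholds are illustrative defaults, not recommendations from the article.
    """
    alerts = []
    # Timeliness: has the latest load arrived within the allowed lag?
    lag = now - last_loaded_at
    if lag > max_lag:
        alerts.append(f"stale: last load {lag} ago")
    # Volume: how many standard deviations is this load from the historical mean?
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        if latest_count != mu:
            alerts.append(f"volume: {latest_count} rows vs constant {mu}")
    else:
        z = (latest_count - mu) / sigma
        if abs(z) > z_threshold:
            alerts.append(f"volume: {latest_count} rows (z={z:.1f})")
    return alerts


if __name__ == "__main__":
    now = datetime(2024, 5, 10, 12, 0, tzinfo=timezone.utc)
    history = [1000, 1020, 980, 1010, 990]
    print(check_ingestion(history, 1005, now - timedelta(minutes=20), now))  # []
    print(check_ingestion(history, 200, now - timedelta(hours=3), now))
```

A z-score on raw counts assumes load sizes are roughly stable; workloads with strong weekly or seasonal patterns usually need a history window that matches the pattern.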

Analytics Vidhya

MARCH 7, 2023

Apache Flume is a data ingestion service for gathering, aggregating, and delivering large amounts of streaming data from diverse sources, such as log files and events, to centralized data storage. Flume is reliable, distributed, and customizable.

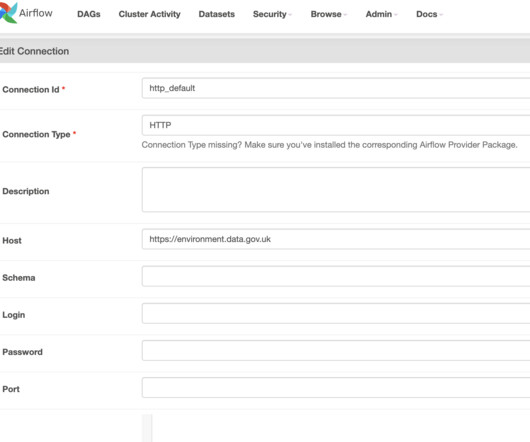

Hevo

SEPTEMBER 3, 2024

In this tutorial, you’ll learn how to create an Apache Airflow MongoDB connection to extract data from a REST API that records flood data daily, transform the data, and load it into a MongoDB database. Why […]

Data Engineering Weekly

JULY 7, 2024

Experience Enterprise-Grade Apache Airflow: Astro augments Airflow with enterprise-grade features to enhance productivity, meet scalability and availability demands across your data pipelines, and more. Hudi seems to be a de facto choice for CDC data lake features; Notion migrated its insert-heavy workload from Snowflake to Hudi.

Snowflake

OCTOBER 3, 2023

We are excited to announce the availability of data pipelines replication, which is now in public preview. In the event of an outage, this powerful new capability lets you easily replicate and failover your entire data ingestion and transformations pipelines in Snowflake with minimal downtime.

Snowflake

APRIL 9, 2024

Outreach data, available via Snowflake Marketplace, contains a huge amount of useful information, including lead scoring, topics that are resonating with audiences, where sales reps spend the most time, which accounts are open to conversations, and more. Each of these sources may store data differently. But that's not all.

Snowflake

MARCH 14, 2024

As a cohesive ERP solution, SAP is often one of the largest data resources in an organization, containing everything from financial and transactional data to master information about customers, vendors, materials, facilities, planning and even HR. What’s the challenge with unlocking SAP data?

Hevo

JULY 10, 2024

While you can use Snowpipe for straightforward and low-complexity data ingestion into Snowflake, Snowpipe alternatives, like Kafka, Spark, and COPY, provide enhanced capabilities for real-time data processing, scalability, flexibility in data handling, and broader ecosystem integration.

DataKitchen

MAY 10, 2024

Harnessing Data Observability Across Five Key Use Cases. The ability to monitor, validate, and ensure data accuracy across its lifecycle is not just a luxury; it's a necessity. Data evaluation: before new data sets are introduced into production environments, they must be thoroughly evaluated and cleaned.

Towards Data Science

JUNE 12, 2024

Python tricks and techniques for data ingestion, validation, processing, and testing: a practical walkthrough.

Christophe Blefari

JANUARY 20, 2024

Learn data engineering, all the references. This is a special edition of the Data News. Right now I'm on holiday, finishing a hiking week in Corsica 🥾, so I wrote this special edition about how to learn data engineering in 2024. The idea is to create a living reference about data engineering.

Knowledge Hut

APRIL 23, 2024

In the modern data-driven landscape, organizations continuously explore avenues to derive meaningful insights from the immense volume of information available. Two popular approaches that have emerged in recent years are data warehouse and big data. Data warehousing offers several advantages.

Team Data Science

MAY 10, 2020

I can now begin drafting my data ingestion/streaming pipeline without being overwhelmed. With careful consideration and learning about your market, the choices you need to make become narrower and clearer. I'll use Python and Spark because they are the two most requested skills in Toronto.

Data Engineering Podcast

NOVEMBER 6, 2022

Summary: Despite the best efforts of data engineers, data is as messy as the real world. Entity resolution and fuzzy matching are powerful utilities for cleaning up data from disconnected sources, but they have typically required custom development and training machine learning models.

Christophe Blefari

MARCH 4, 2023

Formula 1 is back (trying to jinx it before it happens; yes, there is no link with the Data News). Hello you, I hope this new Data News finds you well. But still, to make data work we need to praise the data coworkers who have to write the documentation and carry the governance burden that no one wants to take on.

KDnuggets

APRIL 29, 2022

Top-rated data science tracks consist of multiple project-based courses covering all aspects of data. They include an introduction to Python/R, data ingestion and manipulation, data visualization, machine learning, and reporting.

Data Engineering Weekly

APRIL 21, 2024

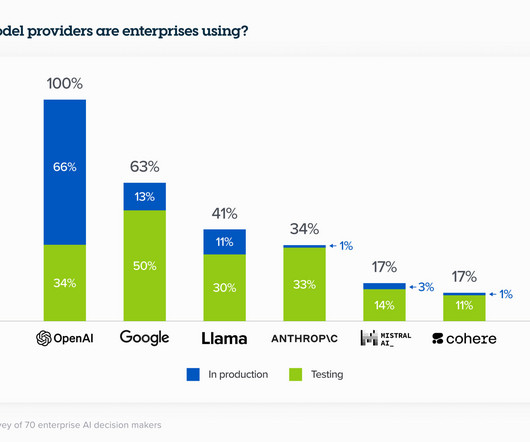

Meta: Introducing Meta Llama 3, the most capable openly available LLM to date. Meta is taking an interesting approach in the growing LLM market, releasing the model openly and distributing it across all the leading cloud providers and data platforms. Counting is the hardest problem in data engineering.

Data Engineering Weekly

MARCH 24, 2024

Kai Waehner: The Data Streaming Landscape 2024. This is a comprehensive overview of the state of the data streaming landscape in 2024. Mercado Libre Tech: Data Mesh @ MELI - Building Highways for Thousands of Data Producers. OK, Data Mesh is still alive!

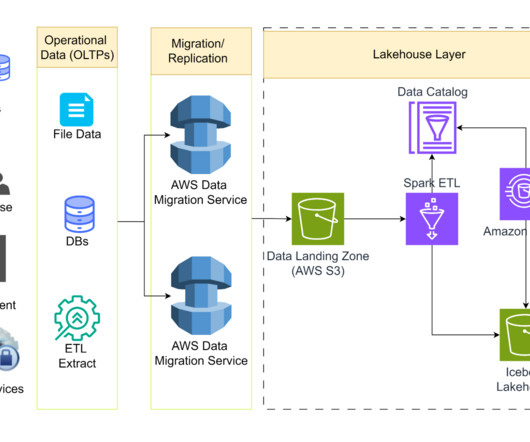

databricks

MAY 31, 2023

Data ingestion into the Lakehouse can be a bottleneck for many organizations, but with Databricks, you can quickly and easily ingest data of.

Edureka

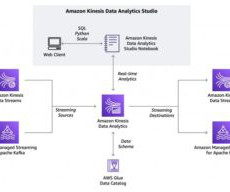

AUGUST 23, 2024

Data analytics is imperative for business success. AI-driven data insights make it possible to improve decision-making. These analytic models work on processed data sets, and the accuracy of decisions improves dramatically once you can use live data in real time. What can I do with Kinesis Data Streams?