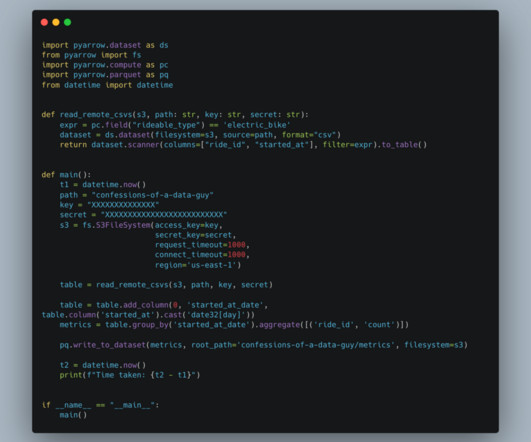

PyArrow vs Polars (vs DuckDB) for Data Pipelines.

Confessions of a Data Guy

JULY 24, 2024

We all keep hearing about Arrow this and Arrow that … it seems every new tool built today for Data Engineering is at least partly based on Arrow’s in-memory format. So, […] The post PyArrow vs Polars (vs DuckDB) for Data Pipelines. appeared first on Confessions of a Data Guy.