The Mistake Every Data Scientist Has Made at Least Once

KDnuggets

SEPTEMBER 22, 2022

How to increase your chances of avoiding the mistake.

Marc Lamberti

SEPTEMBER 18, 2022

The Airflow TaskFlow API is a new way of writing DAGs with ease. As you will see, you need fewer lines than before to obtain the same DAG, which makes DAGs easier to build, read, and maintain. The TaskFlow API has three main aspects: XComArgs, decorators, and XCom backends. In this tutorial, you will learn what the TaskFlow API is, why it is crucial for you, and how to create your DAGs with it.

Confluent

SEPTEMBER 20, 2022

Microservices have numerous benefits, but data silos are incredibly challenging. Learn how Kafka Connect and CDC provide real-time database synchronization, bridging data silos between all microservice applications.

Cloudera

SEPTEMBER 23, 2022

In a recent blog, Cloudera Chief Technology Officer Ram Venkatesh described the evolution of a data lakehouse, as well as the benefits of using an open data lakehouse, especially the open Cloudera Data Platform (CDP). If you missed it, you can read up about it here. Modern data lakehouses are typically deployed in the cloud. Cloud computing brings several distinct advantages that are core to the lakehouse value proposition.

Advertisement

In Airflow, DAGs (your data pipelines) support nearly every use case. As these workflows grow in complexity and scale, efficiently identifying and resolving issues becomes a critical skill for every data engineer. This is a comprehensive guide to debugging Airflow DAGs, with best practices and examples. You’ll learn how to: create a standardized process for debugging to quickly diagnose errors in your DAGs; identify common issues with DAGs, tasks, and connections; distinguish between Airflow-relate

KDnuggets

SEPTEMBER 20, 2022

When building and optimizing your classification model, measuring how accurately it predicts your expected outcome is crucial. However, this metric alone is never the entire story, as it can still offer misleading results. That's where these additional performance evaluations come into play to help tease out more meaning from your model.
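A minimal sketch of why accuracy alone can mislead, using only the Python standard library. The dataset and the always-negative "model" below are hypothetical and chosen purely for illustration; the helper names are not taken from the article.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def classification_report(y_true, y_pred, positive=1):
    """Return accuracy, precision, recall, and F1 as a dict.

    Ratios with a zero denominator are reported as 0.0 (a convention
    assumed here, not something the article specifies).
    """
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical imbalanced data: 95 negatives and 5 positives.
y_true = [0] * 95 + [1] * 5
# A "model" that always predicts the majority (negative) class.
y_pred = [0] * 100

report = classification_report(y_true, y_pred)
for name, value in report.items():
    print(f"{name}: {value:.2f}")
```

Here accuracy comes out high because the negative class dominates, while recall is zero: the model never finds a single positive case. That gap is the kind of blind spot that precision, recall, and F1 are meant to expose.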

Data Engineering Podcast

SEPTEMBER 18, 2022

Summary: In order to improve efficiency in any business, you must first know what is contributing to wasted effort or missed opportunities. When your business operates across multiple locations, it becomes even more challenging and important to gain insights into how work is being done. In this episode, Tommy Yionoulis shares his experiences working in the service and hospitality industries and how that led him to found OpsAnalitica, a platform for collecting and analyzing metrics on multi location

Data Engineering Digest brings together the best content for data engineering professionals from the widest variety of industry thought leaders.

Cloudera

SEPTEMBER 22, 2022

Insurance carriers are always looking to improve operational efficiency. We’ve previously highlighted opportunities to improve digital claims processing with data and AI. In this post, I’ll explore opportunities to enhance risk assessment and underwriting, especially in personal lines and small and medium-sized enterprises. Underwriting is an area that can yield improvements by applying the old saying “work smarter, not harder.”

KDnuggets

SEPTEMBER 22, 2022

Dimensionality reduction techniques are basically a part of the data pre-processing step, performed before training the model.

Data Engineering Podcast

SEPTEMBER 18, 2022

Summary: There is a constant tension in business data between growing silos and breaking them down. Even when a tool is designed to integrate information as a guard against data isolation, it can easily become a silo of its own, where you have to make a point of using it to seek out information. In order to help distribute critical context about data assets and their status into the locations where work is being done, Nicholas Freund co-founded Workstream.

U-Next

SEPTEMBER 23, 2022

What Is Sales Operations? Sales operations refers to the area of an organization that supports, facilitates, and drives the front-line sales team in order to sell faster, better, and more efficiently. It covers the unit’s processes, roles, and activities within the sales organization. The objectives of sales management operations leaders are to maximize the effectiveness of sales teams by enabling them to focus on sales because it enables them to drive business results through th

Speaker: Tamara Fingerlin, Developer Advocate

Apache Airflow® 3.0, the most anticipated Airflow release yet, officially launched this April. As the de facto standard for data orchestration, Airflow is trusted by over 77,000 organizations to power everything from advanced analytics to production AI and MLOps. With the 3.0 release, the top-requested features from the community were delivered, including a revamped UI for easier navigation, stronger security, and greater flexibility to run tasks anywhere at any time.

Cloudera

SEPTEMBER 19, 2022

The identity team at Cloudera has been working to add the System for Cross-domain Identity Management (SCIM) support to Cloudera Data Platform (CDP) and we’re happy to announce the general availability of SCIM on Azure Active Directory! In Part One we discussed: CDP SCIM Support for Active Directory, which discusses the core elements of CDP’s SCIM support for Azure AD.

KDnuggets

SEPTEMBER 22, 2022

Are you ready to learn Excel from the beginning? In this course, you will learn data entry, essential formulas, data visualization, pivot tables, and much more.

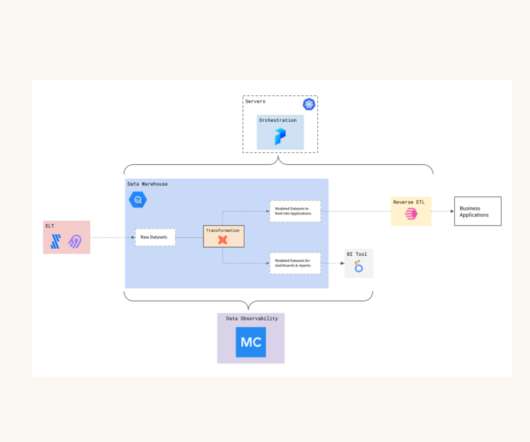

Monte Carlo

SEPTEMBER 22, 2022

Dr. Squatch provides natural products specifically formulated for men who want to feel like a man, and smell like a champion. Making data-driven decisions is critical for the company to “raise the bar” on men’s personal care products according to their VP of Data, IT & Security, Nick Johnson. “Our mission as a data team is to help all of our decision makers across the business–from marketing and product to customer experience and finance–make better decisions that are informed by data,” Nick

U-Next

SEPTEMBER 23, 2022

Introduction. A full-stack developer mainly focuses on developing web applications, which includes front-end development, back-end development, and integration with other platforms such as mobile apps and desktop applications. To succeed in this field you will need good skills in and knowledge of different technologies, such as JavaScript and Ruby on Rails, along with good working experience in these technologies before applying yourself as a full-stack developer candidate in India or a

Speaker: Alex Salazar, CEO & Co-Founder @ Arcade | Nate Barbettini, Founding Engineer @ Arcade | Tony Karrer, Founder & CTO @ Aggregage

There’s a lot of noise surrounding the ability of AI agents to connect to your tools, systems and data. But building an AI application into a reliable, secure workflow agent isn’t as simple as plugging in an API. As an engineering leader, it can be challenging to make sense of this evolving landscape, but agent tooling provides such high value that it’s critical we figure out how to move forward.

Cloudera

SEPTEMBER 20, 2022

Introduction. This blog is intended to serve as an ethics sheet for the task of AI-assisted comic book art generation, inspired by “Ethics Sheets for AI Tasks.” AI-assisted comic book art generation is a task I proposed in a blog post I authored on behalf of my employer, Cloudera. I’m a research engineer by trade and have been involved in software creation in some way or another for most of my professional life.

KDnuggets

SEPTEMBER 19, 2022

Work on machine learning and deep learning portfolio projects to learn new skills and improve your chance of getting hired.

know.bi

SEPTEMBER 20, 2022

What is data testing, and why should you test your data? Apache Hop is a data engineering and data orchestration platform that allows data engineers and data developers to visually design workflows and data pipelines to build robust solutions. However, building data pipelines is just the start. You want to run your workflows and pipelines in production reliably, and you want to make sure your data is processed exactly the way you want it to.

U-Next

SEPTEMBER 22, 2022

Introduction to CNN Architecture. Before we go deeper into image classification with CNN architecture, let us first ask “what is CNN architecture?” A CNN, or Convolutional Neural Network, is a type of neural network that can extract distinctive features from an image. A good example of a CNN application is face detection and recognition, as CNNs can readily classify complex features in image data.

Speaker: Andrew Skoog, Founder of MachinistX & President of Hexis Representatives

Manufacturing is evolving, and the right technology can empower—not replace—your workforce. Smart automation and AI-driven software are revolutionizing decision-making, optimizing processes, and improving efficiency. But how do you implement these tools with confidence and ensure they complement human expertise rather than override it? Join industry expert Andrew Skoog as he explores how manufacturers can leverage automation to enhance operations, streamline workflows, and make smarter, data-dri

KDnuggets

SEPTEMBER 22, 2022

This article is for people who don’t know a thing about MLOps or want to refresh their memory.

Elder Research

SEPTEMBER 20, 2022

The post Data-Driven Change: Essential Mindsets appeared first on Elder Research.

U-Next

SEPTEMBER 22, 2022

Introduction. According to Gartner, Inc., enterprise IT spending on public cloud computing will surpass traditional IT investments in various market segments in 2025. Gartner’s “cloud shift” research includes only cloud-compatible IT categories within the markets for application software, infrastructure, business process services, and system infrastructure.

Advertisement

With Airflow being the open-source standard for workflow orchestration, knowing how to write Airflow DAGs has become an essential skill for every data engineer. This eBook provides a comprehensive overview of DAG writing features with plenty of example code. You’ll learn how to: understand the building blocks of DAGs, combine them in complex pipelines, and schedule your DAG to run exactly when you want it to; write DAGs that adapt to your data at runtime and set up alerts and notifications; Scale you

Rockset

SEPTEMBER 20, 2022

Introduction Cryptocurrencies and NFTs have helped bring blockchain technology to the mainstream over the last few years, driven by the potential for astronomic financial returns. As more users become familiar with blockchain, attention and resources have started to shift towards other use cases for decentralized applications, or dApps. dApps are built on blockchains and are the use case layer for web3 infrastructure, offering a wide range of services.

KDnuggets

SEPTEMBER 23, 2022

Get some advice from the “older” generation.

Striim

SEPTEMBER 20, 2022

Modern-day customers have higher expectations from the brands they interact with. They crave customer experiences that are more timely, targeted, and personalized to their needs. Brands can meet these expectations by integrating real-time analytics into their customer experience. According to a study from Harvard Business Review, 44% of organizations found the adoption of real-time customer analytics to increase their total number of customers and revenue.

Monte Carlo

SEPTEMBER 20, 2022

Data teams spend millions per year tackling the persistent challenges of data downtime. However, it’s often the leanest data teams that feel the sting of poor data quality the most. Here’s how Prefect, a Series B startup and creator of the popular data orchestration tool, harnessed the power of data observability to preserve headcount, improve data quality, and reduce time to detection and resolution for data incidents.

Speaker: Tamara Fingerlin, Developer Advocate

In this new webinar, Tamara Fingerlin, Developer Advocate, will walk you through many Airflow best practices and advanced features that can help you make your pipelines more manageable, adaptive, and robust. She'll focus on how to write best-in-class Airflow DAGs using the latest Airflow features like dynamic task mapping and data-driven scheduling!

Picnic Engineering

SEPTEMBER 20, 2022

Here at Picnic, we love data. Over the last years, Picnic has grown into a data-driven online supermarket that is active in three countries. By leveraging data and algorithms, we have been able to support the company’s growth while maintaining high service levels. Besides numerous demand forecasting models, we have for example built machine learning models to improve our customer service and increase the efficiency of our trips.

KDnuggets

SEPTEMBER 21, 2022

I have chosen to go through how to build a text-to-speech converter in Python. Not only is it simple, but it is also fun and interactive. I will show you two ways you can do it with Python.

Databand.ai

SEPTEMBER 19, 2022

How to create data pipeline and data quality SLA alerts in Databand. Helen Soloveichik, 2022-09-20. Data engineers often get inundated by alerts from data issues. The last thing an engineer wants is to get woken up at night for a minor issue or, worse, to miss a critical one that requires immediate attention. Databand helps fix this problem by cutting through noisy alerts with focused alerting and routing when data pipeline and quality issues occur.

Propel Data

SEPTEMBER 19, 2022

In this article, you’ll learn how to implement the OAuth 2.0 client credentials flow with GraphQL using Node.js.

Speaker: Ben Epstein, Stealth Founder & CTO | Tony Karrer, Founder & CTO, Aggregage

When tasked with building a fundamentally new product line with deeper insights than previously achievable for a high-value client, Ben Epstein and his team faced a significant challenge: how to harness LLMs to produce consistent, high-accuracy outputs at scale. In this new session, Ben will share how he and his team engineered a system (based on proven software engineering approaches) that employs reproducible test variations (via temperature 0 and fixed seeds), and enables non-LLM evaluation m
